In the ten-plus years since Nvidia tied its strategy and future to AI, using the burgeoning technology as its North Star, co-founder and chief executive officer Jensen Huang has repeatedly said that the premier GPU maker is no longer simply a hardware company but a foundational entity in the AI space. It is a message he repeated this week at Nvidia’s GTC 2026 conference in San Jose, California.

To be sure, there was plenty of hardware talk during Huang’s keynote address on the show’s opening day, including platforms from Grace-Blackwell NVL72 to Vera-Rubin NVL72 to Nvidia’s plans for the Groq Language Processing Units (LPUs), not to mention storage systems, interconnects, and the cloud business.

But the overall focus of both the talk and the conference itself has been agentic AI, a market in which Nvidia is the dominant player, a position Huang intends to keep and is willing to spend a lot of money to ensure.

That entails having a hand not only in everything up and down the stack but also in what runs on the hardware. One of the key areas for Nvidia is developing and releasing advanced open source foundation models and physical AI models that run on its infrastructure, a move that comes as the AI industry shifts its emphasis from model training to inferencing.

Nvidia has become more open with its models since November 2023, when it released its first Nemotron open model, born from the company’s broader NeMo framework for building custom generative AI models.

As we have pointed out before in our analysis, Nvidia is perhaps the only company that can afford to give away its models, because it can make its money back on AI systems. Giving models away may eventually prove too costly for Meta Platforms, and Google, OpenAI, and Anthropic most certainly do not give theirs away.

The Nemotron family has expanded significantly in the

intervening years, with open AI models customized for particular industries

that Huang pointed to during his keynote. Among the nine listed are agentic AI,

financial services, healthcare, industrial, quantum,

and telecommunications. Nvidia’s open models hit on all of those industries,

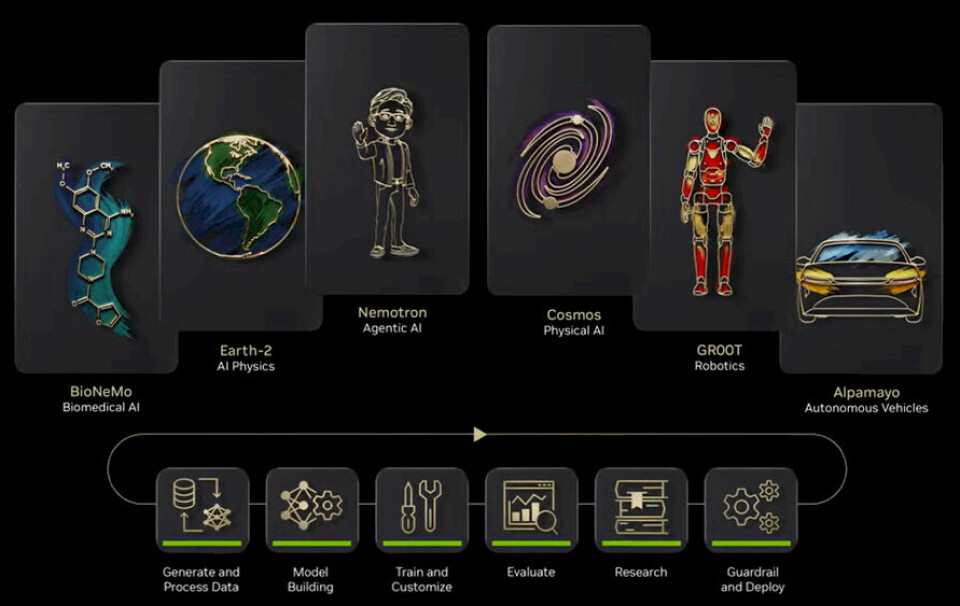

with examples being Cosmos for physical AI, Alpamayo for autonomous

vehicles, BioNeMo for biology, and Nemotron 3, the latest set of

multi-modal models for multi-agent systems.

“This is Nvidia’s open model initiative,” he said. “We are now at the frontier of every single domain of AI models, whether it’s Nemotron, Cosmos World Foundation Model, GR00T, artificial general robotics – humanoid robotics models – Alpamayo for autonomous vehicle, BioNeMo for digital biology, Earth-2 for AI physics. We are at the frontier on every single one.”

The push into open models is important for Nvidia as it establishes itself as something more than the hardware provider that underpins AI. It also becomes the source of the models that run on top of that hardware, and Nvidia is pressing that advantage with open models that cost significantly less than those developed and owned by others.

It’s an effort that Huang and the rest of Nvidia believe in so much that they are investing $26 billion in it over the next five years, a figure reported by Wired and confirmed by Nvidia.

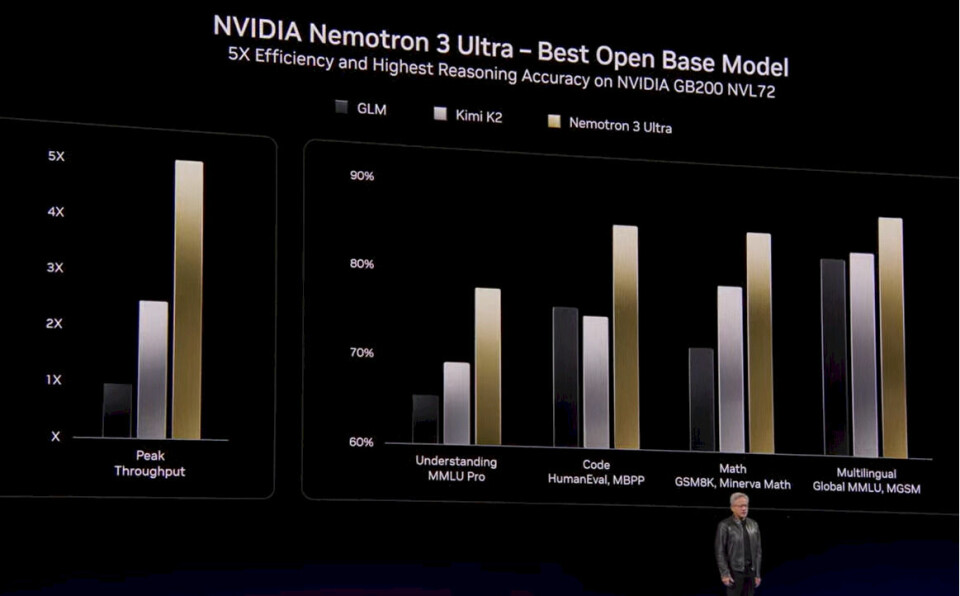

At the show, Nvidia expanded the family of Nemotron 3 open models it first introduced last year. The additions include Nemotron 3 Ultra, which leverages the vendor’s NVFP4 format on the Blackwell GPU platform and is aimed at applications such as coding assistants, search, and workflow automation.
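
As a rough illustration of how a block-scaled 4-bit format like NVFP4 works, the sketch below groups values into blocks of 16, gives each block its own scale, and rounds each scaled value to the nearest 4-bit E2M1 number (magnitudes 0, 0.5, 1, 1.5, 2, 3, 4, and 6). It is a simplified sketch, not Nvidia’s implementation: it keeps each block scale as a full-precision Python float rather than rounding it to FP8 (E4M3), and it omits the additional per-tensor FP32 scale. The function names are hypothetical.

```python
# Simplified sketch of NVFP4-style block quantization.
# Assumptions: block scales stay full-precision floats (real NVFP4 rounds
# them to FP8 E4M3) and the per-tensor FP32 scale is omitted.

BLOCK_SIZE = 16  # values sharing one scale factor
# Non-negative magnitudes representable by a 4-bit E2M1 float.
E2M1_GRID = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]
E2M1_MAX = E2M1_GRID[-1]


def nearest_e2m1(x: float) -> float:
    """Round x to the closest signed E2M1 value."""
    mag = min(E2M1_GRID, key=lambda g: abs(abs(x) - g))
    return -mag if x < 0 else mag


def quantize_block(block: list[float]) -> tuple[float, list[float]]:
    """Return (scale, codes) so that block[i] is approximately scale * codes[i]."""
    amax = max(abs(v) for v in block)
    # Map the block's largest magnitude onto the largest E2M1 value (6.0).
    scale = amax / E2M1_MAX if amax > 0 else 1.0
    codes = [nearest_e2m1(v / scale) for v in block]
    return scale, codes


def quantize(values: list[float]) -> list[tuple[float, list[float]]]:
    """Split values into BLOCK_SIZE chunks and quantize each chunk independently."""
    return [quantize_block(values[i:i + BLOCK_SIZE])
            for i in range(0, len(values), BLOCK_SIZE)]


def dequantize(blocks: list[tuple[float, list[float]]]) -> list[float]:
    """Reconstruct approximate values by multiplying codes by their block scale."""
    return [scale * c for scale, codes in blocks for c in codes]


if __name__ == "__main__":
    weights = [0.03 * i - 0.4 for i in range(32)]
    blocks = quantize(weights)
    approx = dequantize(blocks)
    worst = max(abs(a - b) for a, b in zip(weights, approx))
    print(f"{len(blocks)} blocks, max abs error {worst:.4f}")
```

Because each scale tracks only its own block’s largest value, an outlier coarsens the rounding within its block of 16 rather than across the whole tensor.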

Other new Nemotron 3 models are Omni, which integrates audio, vision, and language understanding to draw information from videos and documents with greater efficiency and accuracy than similar offerings, and VoiceChat, which lets AI listen and respond at the same time during real-time conversations by combining speech recognition, large language model (LLM) processing, and text-to-speech. There are also safety models for detecting unsafe content in text and images, and a retrieval pipeline to improve the relevance and accuracy of the models’ outputs.

Also part of the model barrage was NemoClaw, a reference design that adds security and privacy guardrails and governance features to OpenClaw, the widely popular agentic personal assistant that businesses and individuals alike are rapidly adopting but that has been dogged by security concerns. Now, when organizations want to bring OpenClaw into their environments (Huang said it is being used throughout Nvidia), they can use NemoClaw, complete with its security features.

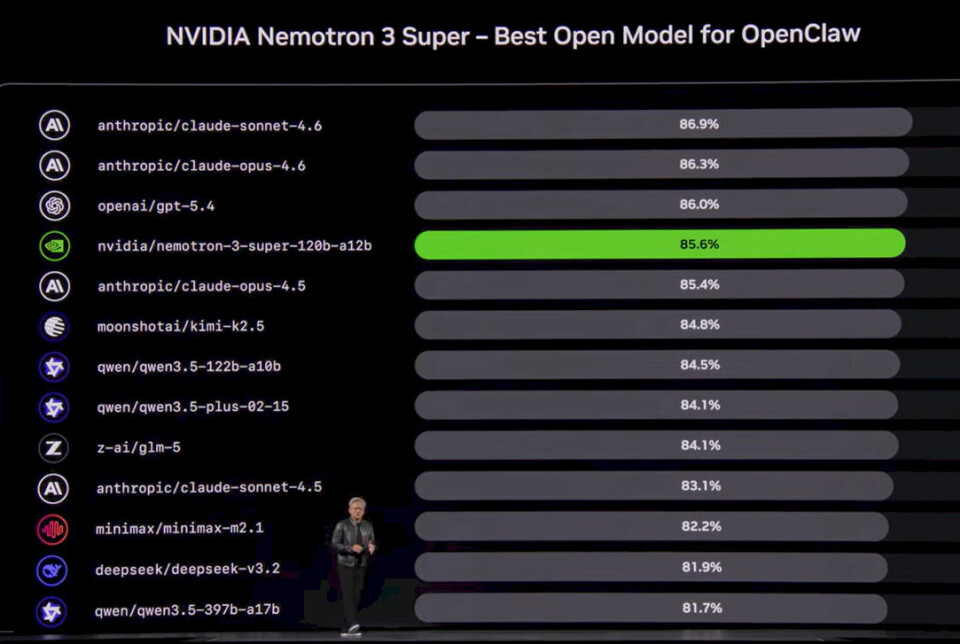

Days before the show opened, Nvidia released Nemotron 3 Super, a model with 120 billion parameters in all, 12 billion of them active, that is designed for high compute efficiency and accuracy. Both are issues for multi-agent systems, which can generate up to 15 times as many tokens as standard chats. Resending history, tool outputs, and reasoning steps creates what Nvidia developers call a “context explosion” that eventually causes agents to drift from their original goal.
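
A toy calculation shows why resending context inflates token counts. It assumes, for illustration only, that every turn resends the entire accumulated context along with its new content, so the tokens read over a session are the sum of running totals and grow roughly quadratically with the number of turns. The turn sizes and function names below are hypothetical, not Nvidia measurements.

```python
from itertools import accumulate


def total_tokens_processed(turn_sizes: list[int]) -> int:
    """Tokens read across a session when each turn resends all prior context.

    Turn k processes turn_sizes[0] + ... + turn_sizes[k], so the session
    total is the sum of the running (prefix) sums.
    """
    return sum(accumulate(turn_sizes))


def uniform_total(size: int, turns: int) -> int:
    """Closed form for equal-sized turns: size * n(n+1)/2, quadratic in n."""
    return size * turns * (turns + 1) // 2


if __name__ == "__main__":
    # Illustrative numbers only (hypothetical, not measured).
    chat = [200] * 5          # five short chat turns
    agent = [200 + 300] * 12  # twelve agent steps, each adding a message
                              # plus ~300 tokens of tool output/reasoning
    chat_total = total_tokens_processed(chat)
    agent_total = total_tokens_processed(agent)
    print(chat_total, agent_total, agent_total / chat_total)
```

With these invented inputs, the 12-step agent session reads 39,000 tokens against 3,000 for the five-turn chat, a 13x gap driven both by the longer session and by the bulkier per-step payloads.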

Nvidia developers addressed the typical tradeoffs between efficiency and accuracy through architectural changes. One is a latent mixture-of-experts design that compresses tokens before they reach the experts, so the model can call four times as many specialists for the same inference cost. Another is multi-token prediction, which forecasts multiple future tokens in one pass to reduce generation time for long sequences and allows for built-in speculative decoding. They also combined Mamba layers for sequence efficiency with Transformer layers for precise reasoning, driving higher throughput with four times the memory and compute efficiency.
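
The latent mixture-of-experts idea can be illustrated with a back-of-the-envelope cost model plus a toy router. Assume, as a simplification, that an expert’s cost per token scales with the width of the vector it receives; projecting each token down to a latent width one quarter of the model width then lets the router activate four times as many experts for the same expert compute. The dimensions, the random projection, the top-k softmax router, and all function names below are hypothetical illustrations, not Nemotron 3 Super’s actual configuration or code.

```python
import math
import random


def expert_macs(d_in: int, d_ff: int) -> int:
    """Multiply-accumulates per token for one expert MLP: d_in -> d_ff -> d_in."""
    return 2 * d_in * d_ff


def experts_for_budget(budget: int, d_in: int, d_ff: int) -> int:
    """How many experts one token can visit within a fixed MAC budget."""
    return budget // expert_macs(d_in, d_ff)


def project(token: list[float], weights: list[list[float]]) -> list[float]:
    """Down-project a token vector: one output per row of weights."""
    return [sum(w * x for w, x in zip(row, token)) for row in weights]


def top_k_route(latent: list[float], router: list[list[float]],
                k: int) -> list[tuple[int, float]]:
    """Score every expert, keep the k best, and softmax-normalize their gates."""
    scores = [sum(w * x for w, x in zip(row, latent)) for row in router]
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    m = max(scores[i] for i in top)  # subtract max for numerical stability
    exps = [math.exp(scores[i] - m) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


if __name__ == "__main__":
    d_model, d_latent, d_ff = 512, 128, 256       # hypothetical sizes
    budget = 4 * expert_macs(d_model, d_ff)       # cost of 4 full-width experts
    k_full = experts_for_budget(budget, d_model, d_ff)     # 4
    k_latent = experts_for_budget(budget, d_latent, d_ff)  # 16
    print(k_full, k_latent)

    rng = random.Random(0)
    n_experts = 64
    token = [rng.gauss(0, 1) for _ in range(d_model)]
    down = [[rng.gauss(0, 0.05) for _ in range(d_model)] for _ in range(d_latent)]
    router = [[rng.gauss(0, 0.1) for _ in range(d_latent)] for _ in range(n_experts)]
    gates = top_k_route(project(token, down), router, k_latent)
    print(len(gates), round(sum(g for _, g in gates), 6))
```

With a budget equal to four full-width experts, the cost model allows 16 experts at the quarter-width latent size. The sketch deliberately ignores the cost of the down-projection itself and of the up-projection back to model width that a real layer would also need.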

The work on Nemotron 3 Super echoes Huang’s keynote statement that Nvidia intends to keep advancing the models, assuring organizations that signing on to them is a good bet.

“Each and every one of these, we’re going to continue to advance these models – vertical integration, horizontal openness – so that we can enable everybody to join the AI revolution,” he said. “We want to create the foundation model so that all of you can fine-tune it and post-train it into exactly the intelligence you need. Nemotron 3 Ultra is going to be the best base model the world’s ever created. This allows us to help every country build their sovereign AI, and we’re working with so many different companies out there.”

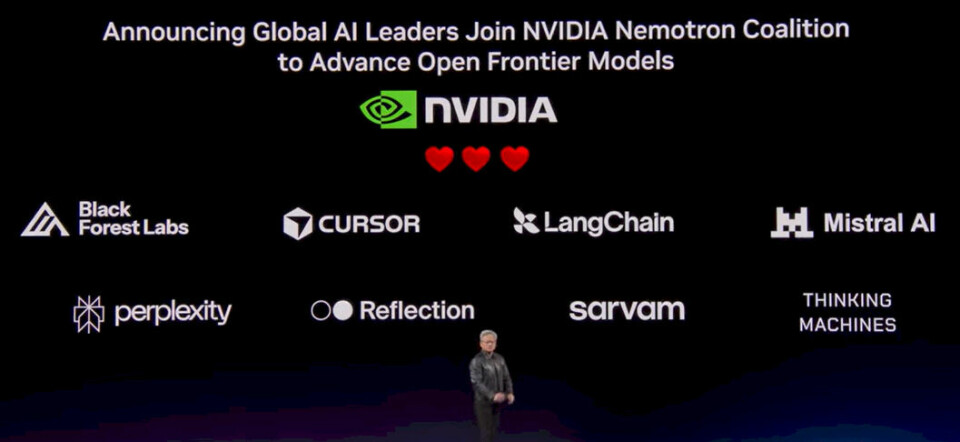

Some of those companies are part of Nvidia’s new Nemotron Coalition (what Nvidia calls Nemotron 4), which brings together model builders and AI developers to advance the development of Nvidia’s frontier open models through joint research, data, and compute. The first members are Black Forest Labs, Cursor, LangChain, Mistral AI, Perplexity, Reflection AI, Sarvam, and Thinking Machines Lab.

The coalition’s first project is a base open model that will be co-developed by Mistral AI and Nvidia and trained on Nvidia’s DGX Cloud, with other group members adding data, evaluations, and domain expertise to support post-training and ongoing development, according to Nvidia. The model will be foundational to the upcoming Nemotron 4 models.

“We have invested billions of dollars of AI infrastructure so that we could develop the core engines for AI that’s necessary for all the libraries of inference and so on, but also to create the AI models to activate every single industry in the world,” Huang said. “I have said that every single enterprise company, every single software company in the world needs an agentic system, needs an agent strategy. You need to have an OpenClaw strategy, and they all agree and they’re all partnering with us to integrate NeMo, the NemoClaw reference design, the Nvidia Agentic AI toolkit and, of course, all of our open models.”

Editor’s Note: It is way uncool to call yourself Thinking Machines. The original Thinking Machines Corporation has a special place in the history of both AI and supercomputing in the 1980s and 1990s, and the only way reusing the name would even be acceptable is with the blessing of its co-founder, Danny Hillis. Tacking “Labs” on it doesn’t make it OK.