The work of developing and improving quantum processors continues to accelerate as companies big and small push to reach the point of fault-tolerant quantum systems. We have written about a number of the advancements, such as breakthroughs over the past year or more by Google, Microsoft, and Amazon Web Services to create quantum chips that can address the issue of error correction, a significant hurdle that needs to be cleared before commercial, fault-tolerant quantum systems can become a reality.

More recent efforts in quantum processor development have followed. A group of scientists at the International Quantum Academy in Shenzhen, China, this week published a paper in Nature Nanotechnology unveiling a superconductor-based quantum chip designed to address such issues as errors caused by “environmental noise” like light and movement, as well as the amount of resources needed when managing and encoding logical qubits. The scientists addressed this by using dual-rail encoding, which uses two physical superconducting qubits to create a single logical qubit.
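
As a rough illustration of the dual-rail idea, the sketch below stores one logical qubit in the single-excitation states of a pair of physical qubits. The convention (logical 0 as |01>, logical 1 as |10>), the function names, and the toy loss model are assumptions made for illustration, not details taken from the paper. The point it shows is that losing the excitation drops the pair into |00>, outside the code space, so the error can be flagged rather than silently corrupting the data.

```python
import numpy as np

# Two-qubit computational basis, ordered |00>, |01>, |10>, |11>.
BASIS = np.eye(4, dtype=complex)
KET_00, KET_01, KET_10 = BASIS[0], BASIS[1], BASIS[2]

# Assumed dual-rail convention: logical 0 = |01>, logical 1 = |10>.
LOGICAL_0 = KET_01
LOGICAL_1 = KET_10

# Projector onto the code space (exactly one excitation shared by the pair).
P_CODE = np.outer(LOGICAL_0, LOGICAL_0.conj()) + np.outer(LOGICAL_1, LOGICAL_1.conj())


def encode(alpha, beta):
    """Return the normalized dual-rail state alpha|01> + beta|10>."""
    state = alpha * LOGICAL_0 + beta * LOGICAL_1
    return state / np.linalg.norm(state)


def lose_excitation(state, p_loss):
    """Toy loss model (hypothetical): with probability p_loss the excitation
    decays and the pair ends up in |00>. Returns a density matrix."""
    rho = np.outer(state, state.conj())
    return (1 - p_loss) * rho + p_loss * np.outer(KET_00, KET_00.conj())


def code_space_probability(rho):
    """Probability that a check finds the pair still inside the code space."""
    return float(np.real(np.trace(P_CODE @ rho)))


if __name__ == "__main__":
    psi = encode(1.0, 1.0)
    rho = lose_excitation(psi, p_loss=0.2)
    print("P(still in code space) =", code_space_probability(rho))
```

In this toy model the probability of leaving the code space equals the loss probability, which is what makes such errors detectable as erasures.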

In a paper published earlier this month in Nature Electronics, SEEQC showed how digital superconducting control circuits can reliably integrate with quantum chips at millikelvin temperatures, a step forward in addressing the challenge of scaling superconducting quantum architectures.

Scientists with Bangalore, India-based QpiAI this week said they created a scalable error correction system – a decoder – that uses a rotated surface code architecture to allow for fast and scalable error correction. It can operate in real time next to superconducting qubits and is another step on the way to fault-tolerant quantum systems, according to the company.
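
For a sense of the scale a decoder has to handle, the sketch below uses the standard textbook qubit counts for a distance-d surface code patch in its rotated and unrotated layouts. The function names are hypothetical and nothing here describes QpiAI's actual design; it only illustrates why the rotated layout is commonly used.

```python
def rotated_surface_code_qubits(distance):
    """Textbook counts for a rotated surface code patch of a given distance.

    Returns (data_qubits, measure_qubits, total_qubits).
    """
    if distance < 2:
        raise ValueError("distance must be at least 2")
    data = distance * distance
    measure = distance * distance - 1
    return data, measure, data + measure


def unrotated_surface_code_qubits(distance):
    """Textbook counts for the unrotated (planar) layout at the same distance."""
    if distance < 2:
        raise ValueError("distance must be at least 2")
    data = distance * distance + (distance - 1) * (distance - 1)
    measure = 2 * distance * (distance - 1)
    return data, measure, data + measure


if __name__ == "__main__":
    for d in (3, 5, 7):
        print(d, rotated_surface_code_qubits(d), unrotated_surface_code_qubits(d))
```

At the same code distance the rotated layout needs roughly half as many physical qubits, and each round of error correction produces one syndrome bit per measure qubit for the decoder to process.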

The Challenge Of Simulating Quantum Materials

However, while quantum chip development is moving at a rapid pace, a question has been whether today’s quantum processors and their relatively limited number of qubits can realistically simulate quantum materials, a key goal in quantum computing and central to what proponents say such systems need to do. This includes simulating the structures of molecules, proteins, and catalysts for drug discovery, the behavior of materials for better superconductors, and even their own error correction codes to maintain qubit coherence – the ability to keep a fixed phase relationship – in noisy environments.

To do that, they need to understand quantum behavior, something that can’t always be done using classical computing methods.

A group of scientists from IBM, the US Department of Energy-funded Quantum Science Center at Oak Ridge National Laboratory, Purdue University, the University of Illinois at Urbana-Champaign, Los Alamos National Laboratory, and the University of Tennessee showed that a superconducting 50-qubit IBM Heron r2 quantum processor can simulate real magnetic materials and produce results that match those of experiments run on classical computers.

They wrote in a 49-page preprint paper on arXiv that this means quantum hardware available now, used with new algorithms and quantum-centric supercomputing workloads, can simulate the properties of materials, putting such systems on the path to being useful tools for scientific discovery.

Advances in methods used by classical computers have allowed them to accurately predict properties of quantum materials, the scientists wrote, but “strongly correlated systems with long-range entanglement and complex dynamics remain beyond the reach of these approaches. Quantum computers offer a potential alternative to addressing this.”

That’s because quantum computers obey the same physical rules as such materials, making them a natural fit for simulating quantum systems. It’s something that physicist Richard Feynman proposed decades ago.
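
To make the classical difficulty concrete, here is a minimal sketch that builds a spin-1/2 Heisenberg chain Hamiltonian as a dense matrix and finds its ground-state energy by exact diagonalization. The choice of model (a nearest-neighbor antiferromagnetic chain with open ends and unit coupling) and all function names are assumptions for illustration; this is not the algorithm the researchers ran.

```python
import numpy as np

# Spin-1/2 operators, in units where hbar = 1.
SX = 0.5 * np.array([[0, 1], [1, 0]], dtype=complex)
SY = 0.5 * np.array([[0, -1j], [1j, 0]], dtype=complex)
SZ = 0.5 * np.array([[1, 0], [0, -1]], dtype=complex)
ID = np.eye(2, dtype=complex)


def site_operator(op, site, n_sites):
    """Embed a single-site operator at position `site` of an n_sites chain."""
    result = np.array([[1.0 + 0.0j]])
    for i in range(n_sites):
        result = np.kron(result, op if i == site else ID)
    return result


def heisenberg_chain(n_sites, coupling=1.0):
    """Dense Hamiltonian H = J * sum_i S_i . S_{i+1} with open boundaries."""
    dim = 2 ** n_sites
    hamiltonian = np.zeros((dim, dim), dtype=complex)
    for i in range(n_sites - 1):
        for op in (SX, SY, SZ):
            hamiltonian += coupling * (
                site_operator(op, i, n_sites) @ site_operator(op, i + 1, n_sites)
            )
    return hamiltonian


def ground_state_energy(n_sites, coupling=1.0):
    """Lowest eigenvalue of the chain Hamiltonian (exact diagonalization)."""
    return float(np.linalg.eigvalsh(heisenberg_chain(n_sites, coupling))[0])


if __name__ == "__main__":
    for n in (2, 4, 8):
        print(n, 2 ** n, ground_state_energy(n))
```

The matrix has 2**n_sites rows and columns, so each added spin doubles its size. This brute-force approach stops being practical at a few dozen spins, and at 50 spins the state vector alone would have about 1.1 × 10^15 entries.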

However, while growing qubit counts and improved gate fidelity have led to studies of static and dynamical properties of many-body systems at scales beyond what classical systems can do, the feeling has been that today’s quantum systems don’t have the necessary capabilities – in areas such as circuit depth and error rates – to simulate such realistic materials, they wrote.

“It has therefore been unclear whether current, pre-fault-tolerant quantum computers can ever perform quantitatively reliable many-body simulations of quantum materials that can be closely compared with laboratory measurements,” the scientists wrote.

Running The Test

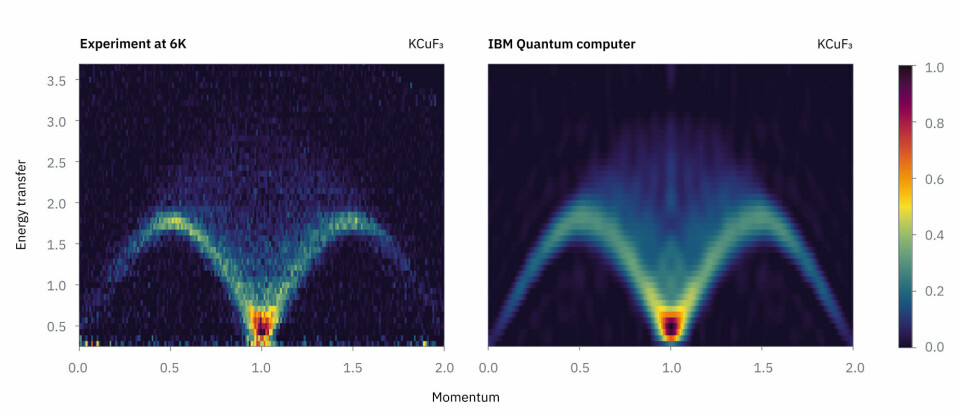

For the experiment, they targeted a sample of KCuF₃, a magnetic compound whose measurements had been captured through neutron scattering experiments, which show the internal behavior of materials. Neutron scattering works, but it’s difficult to compute classically, according to the scientists.

The work was run on the Heron processor, with the experimental data gathered from neutron sources at the Spallation Neutron Source at Oak Ridge (shown in the feature image at the top of this story) and the UK’s Rutherford Appleton Laboratory. To reduce the depth of the quantum circuits, the scientists also used a noise-robust algorithm and classical computing resources at the Illinois Campus Cluster.

The simulation of the KCuF₃ sample run on the IBM quantum system (on the right, below) matched the results of the neutron scattering experiments (left).

It falls in line with what IBM and other quantum vendors see as the likely scenario of quantum computers working with classical HPC systems in a hybrid-computing fashion, with the quantum systems taking on workloads and equations that are beyond the reach of their classical brethren.

The plan for the researchers moving forward is to use this type of simulation with quantum materials that are higher-dimensional and more complex than KCuF₃.

The scientists wrote that their work demonstrates that “quantum simulation of real materials on pre-fault-tolerant, programmable quantum hardware is no longer an elusive goal. … These quantum simulations faithfully capture key emergent quantum phenomena observed in real materials, including the two-spinon continuum and the manner in which anisotropy and realistic next-nearest-neighbor couplings reshape this continuum. Together, these results establish that quantum computers are moving beyond proof-of-principle testbeds and are beginning to function as practical scientific tools for the study of quantum materials.”

A Roadmap For Hybrid ModSim And Quantum Systems

It also gives quantum computing vendors a roadmap for assessing quantum simulations in increasingly complex settings. There eventually will be a time in which quantum simulation shifts from a benchmarking practice to a key tool for “condensed-matter physics,” allowing scientists to use quantum devices to delve into areas that are beyond the reach of classical simulation, they wrote.

“Looking ahead, as quantum devices scale to larger lattices, support longer time evolutions, and address generic two-dimensional non-integrable models, this framework positions quantum simulation as an engine for constructing comprehensive equilibrium descriptions of quantum materials,” the scientists wrote.

The quantum simulation work is the latest in a string of quantum-related announcements by IBM this month. The vendor joined with The University of Manchester, Oxford University, ETH Zurich, EPFL and the University of Regensburg to create a half-Möbius electronic topology in a single molecule – something never seen before – and then used an IBM quantum system to determine why it worked, a job that would be difficult for classical computers.

Earlier this week, IBM announced that in research run with the Cleveland Clinic, they used a Heron quantum chip and a quantum-centric supercomputing workflow to simulate the electronic structure of a protein.