It was less than two years ago that Zapata Computing fell apart. In 2017, the company spun out of Harvard University’s quantum research lab with a focus on the top application layer of the quantum stack. Zapata researchers did some of the foundational work in the space, including on quantum intermediate representation (QIR), which essentially creates a bridge between quantum programming languages and hardware.

They built a portfolio of dozens of patents – including for QIR – and published more than 40 scientific papers.

“Unfortunately, we were a little bit ahead of our time,” Sumit Kapur tells The Next Platform, explaining that in the later years of the last decade, quantum systems hadn’t advanced much and it was unclear when true commercial quantum computing would come onto the scene.

That’s when things got shaky for the startup. The industry went into what Kapur, who came on as chief financial officer in 2024, called a “bit of quantum winter,” and the company had to find alternative financing. Executives tapped an AI-oriented special purpose acquisition company (SPAC) for funding, and Zapata shifted to what he calls a “quantum-inspired AI” focus.

“That pivot didn’t leave us well situated,” Kapur says. “It wasn’t really our forte, and so we didn’t have the strength there, we didn’t have the differentiation there. We found ourselves mispivoted strategically in order to access a SPAC that didn’t work.”

The company didn’t get the hoped-for money – “the SPAC was supposed to bring in $100 million of equity capital. It brought in effectively zero of equity capital, and what it did bring in is $20-plus millions of liability” – and after several months of trying to dig out of the financial hole, it shut down operations in October 2024.

The Comeback

Things turned around a year later. Under Kapur’s leadership as chief executive officer, the company re-emerged in September 2025 as Zapata Quantum after filing for bankruptcy and drawing up a two-phase restructuring plan. That plan, completed in November, addressed more than $18 million in debt and brought in millions of investment dollars from new backers. The company also was able to protect the IP it built up over the previous years, including its 60-plus patents, and it is building strong partnerships with top players in the quantum space.

The mission remains the same, Kapur says.

“We’re going to advance the quantum economy, quantum era, by focusing on the top level of the stack and accelerating application development,” he says. “But what we realized is that the process, in order to connect use cases to algorithms, is extraordinarily complex.”

Vendors have made significant strides on the hardware side. For example, new processors from Microsoft, Google, Amazon Web Services, and IBM are helping to address error correction challenges, a key step in accelerating the development of commercial, fault-tolerant quantum systems. Meanwhile, hyperscalers, chip makers, and pure-plays alike are making advancements in quantum hardware.

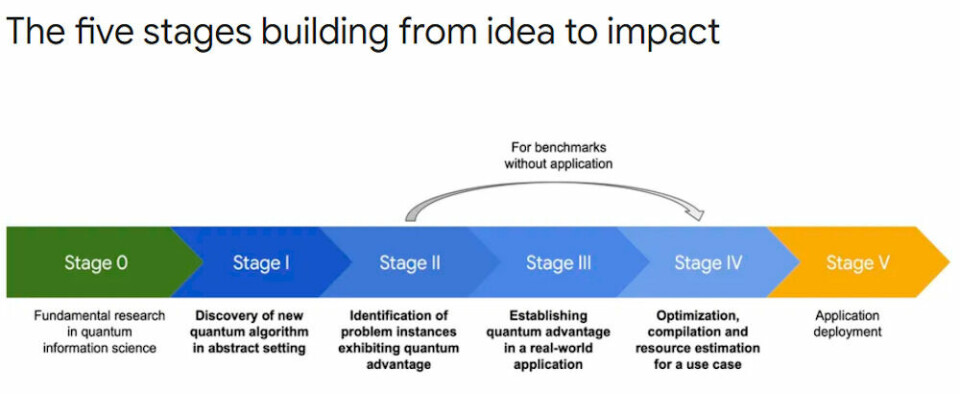

With hardware innovation accelerating, work on software likewise needs to speed up. It’s a message Google scientists pushed in November 2025 by publishing a research paper – “The Grand Challenge of Quantum Applications” – in which they proposed a five-stage framework for quantum application development.

It is an area that hasn’t gotten the attention it needs, the scientists argue.

“When it comes to the hard work required to uncover and substantiate truly promising quantum applications, we believe the quantum industry appears to face a classic collective action problem, leading to systemic under-investment in high quality work in this area,” they wrote. “While the necessity of new applications is widely acknowledged, prevailing wisdom is that the competitive advantage of major quantum efforts lies primarily in hardware.”

As Ryan Babbush, director of research for quantum algorithms and applications at Google, put it, “building a fault-tolerant quantum computer is a grand challenge of hardware. Using it is a grand challenge of applications.”

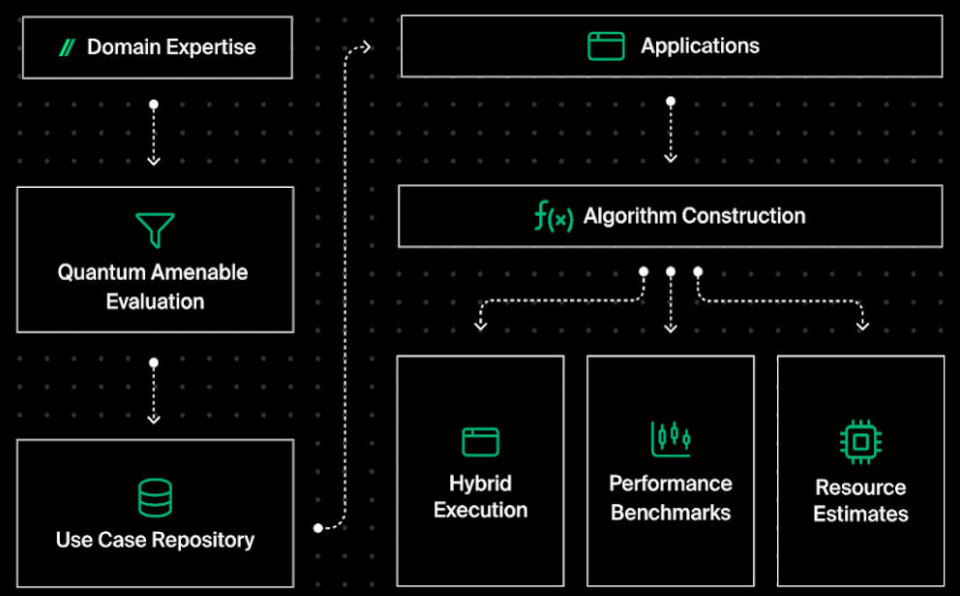

The revived company comes with its Orquesta Platform (below), a unified software stack for accelerating the development of quantum applications. It includes the vendor’s Quantum Graph database of use cases, Quantum Pilot AI assistant, and QuantumOps and Bench-Q spec.

Zapata quantum platform

Zapata took another key step in its strategy last month, when its patent for QIR, a core component of its larger IP strategy for pushing the building blocks of a hybrid classical-quantum software stack, was granted in Canada, Europe, Israel, and Australia. It had already been granted in the United States.

Working With QIR

In its previous iteration, Zapata filed the QIR patent after running into a challenge while developing quantum applications and algorithms, according to Jonathan Olson, the company’s strategic advisor for IP.

“Every time you wanted to have some sort of software front-end connecting with a hardware back-end, you’d have to write some kind of translator or some kind of API connecting those two things,” Olson tells The Next Platform. “This is a problem that had been largely solved in classical computing with LLVM. With quantum, there’s a little bit more complexity to it, and that was the sort of complexity that we solved. QIR was clearly a sort of valuable element that we knew had to become a part of the ecosystem at some point, because otherwise it’s just an enormous amount of wasted effort if everyone is writing an API every time they want to connect some software tool to some hardware tool.”
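Olson’s point about wasted effort comes down to simple arithmetic: with M software front-ends and N hardware back-ends, point-to-point integration needs a translator for every pairing (M × N), while a shared intermediate representation needs only one lowering step per front-end and one code generator per back-end (M + N). The Python sketch below is a toy illustration of that idea under stated assumptions, not QIR itself (real QIR is built on LLVM IR); the tuple-based IR, both input formats, and both “hardware” dialects are hypothetical.

```python
# Toy illustration of a shared intermediate representation (IR).
# NOT QIR: the IR format, front-ends, and back-ends are all hypothetical.

# Hypothetical shared IR: a program is a list of (gate_name, qubit_indices).
Op = tuple[str, tuple[int, ...]]


# --- Front-ends: each lowers its own input format to the shared IR once. ---
def frontend_text(src: str) -> list[Op]:
    """Parses a made-up text format such as 'H 0; CX 0 1'."""
    ops = []
    for stmt in src.split(";"):
        if not stmt.strip():
            continue  # tolerate a trailing ';'
        name, *qubits = stmt.split()
        ops.append((name.lower(), tuple(int(q) for q in qubits)))
    return ops


def frontend_dicts(prog: list[dict]) -> list[Op]:
    """Accepts a made-up list-of-dicts format: [{'gate': 'h', 'targets': [0]}]."""
    return [(d["gate"], tuple(d["targets"])) for d in prog]


# --- Back-ends: each consumes the shared IR once, whatever produced it. ---
def backend_alpha(ops: list[Op]) -> str:
    """Emits a made-up assembly dialect for hypothetical hardware 'alpha'."""
    return "\n".join(f"{g.upper()} " + ",".join(f"q{q}" for q in qs) for g, qs in ops)


def backend_beta(ops: list[Op]) -> str:
    """Emits a made-up call-style dialect for hypothetical hardware 'beta'."""
    return "\n".join(f"{g}({', '.join(map(str, qs))})" for g, qs in ops)


def translators_needed(m: int, n: int, shared_ir: bool) -> int:
    """Point-to-point needs one translator per (front-end, back-end) pair;
    a shared IR needs one component per front-end plus one per back-end."""
    return m + n if shared_ir else m * n


if __name__ == "__main__":
    ir_a = frontend_text("H 0; CX 0 1")
    ir_b = frontend_dicts([{"gate": "h", "targets": [0]},
                           {"gate": "cx", "targets": [0, 1]}])
    assert ir_a == ir_b  # two different front-ends, one shared IR
    for emit in (backend_alpha, backend_beta):
        print(emit(ir_a))
    print(translators_needed(10, 20, shared_ir=False))  # prints 200
    print(translators_needed(10, 20, shared_ir=True))   # prints 30
```

The design choice mirrors what LLVM does for classical compilers: supporting a new language or a new machine costs one new component rather than one per existing partner.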

The problem for Zapata – again – was that the timing for introducing QIR was off.

“It was too early in the sense that people weren’t ready to adopt this sort of standardization, and in some sense that was totally understandable,” he says. “Conversations around that is what actually drove the creation of the QIR Alliance.”

The QIR Alliance was founded in 2021 by The Linux Foundation. Its steering committee includes Microsoft, Nvidia, Quantum Circuits, Quantinuum, Rigetti, and Oak Ridge National Laboratory, and it hosts a lineup of projects.

Zapata has its QIR patent in hand, but “with us sort of dipping out for the last year, there’s still some ongoing strategic discussions as to how we want to utilize the patent,” Olson says. “But it’s clear to us that we are going to use all of our resources. I don’t want to speak as to exactly what the use of those patents will be because sometimes that depends on how other parties are engaging with us and how the space is evolving, whether people are ready to start adopting that yet. I don’t know that we have an extremely strong viewpoint on exactly when standardization needs to happen, but we want to be positioned to take advantage of it when it occurs.”

More Projects Underway

That said, the company isn’t standing pat. Zapata is partnering with the University of Maryland on a project that will use a formal verification-first model as a key part of building quantum applications, ensuring that correctness is maintained throughout the process. The approach has an established history in classical computing, but the Zapata-UMD partnership aims to apply it to increasingly complex quantum algorithms.
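Formal verification, in the sense used here, means machine-checked proofs that a program or a compiler transformation is correct for every possible input. As a much weaker, purely numerical stand-in that only illustrates the property such proofs protect, the Python sketch below checks that a single-qubit circuit rewrite (replacing H, Z, H with a single X gate, a standard identity) leaves the circuit’s unitary unchanged, and that a deliberately buggy rewrite is caught. It is an assumption-laden illustration, not the method used in the Zapata-UMD project, and all helper names are hypothetical.

```python
import math

# Minimal sketch: numerically check that a circuit rewrite preserves the
# circuit's unitary. This is NOT formal verification (there is no proof
# covering all circuits); it only illustrates the property a verified
# compiler pass guarantees. All helper names are hypothetical.

Matrix = list[list[complex]]

S = 1 / math.sqrt(2)
H: Matrix = [[S, S], [S, -S]]  # Hadamard
X: Matrix = [[0, 1], [1, 0]]   # Pauli-X
Z: Matrix = [[1, 0], [0, -1]]  # Pauli-Z
I2: Matrix = [[1, 0], [0, 1]]  # identity (the empty circuit)


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain square matrix product a @ b."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def circuit_unitary(gates: list[Matrix]) -> Matrix:
    """Gates are listed in time order, so each later gate multiplies on the left."""
    u = I2
    for g in gates:
        u = matmul(g, u)
    return u


def same_unitary(u: Matrix, v: Matrix, tol: float = 1e-9) -> bool:
    """Entrywise comparison within a floating-point tolerance.
    Global phase is not factored out, to keep the sketch short."""
    n = len(u)
    return all(abs(u[i][j] - v[i][j]) < tol for i in range(n) for j in range(n))


def rewrite_preserves_semantics(before: list[Matrix], after: list[Matrix]) -> bool:
    """True if the rewritten gate sequence implements the same unitary."""
    return same_unitary(circuit_unitary(before), circuit_unitary(after))


if __name__ == "__main__":
    print(rewrite_preserves_semantics([H, Z, H], [X]))  # True: HZH = X
    print(rewrite_preserves_semantics([H, H], []))      # True: HH = I
    print(rewrite_preserves_semantics([H, H], [X]))     # False: a buggy rewrite
```

A check like this only covers the specific circuits it is run on; a verification-first approach instead aims to prove a rewrite correct once, for every circuit it could be applied to.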

Both Kapur and Olson say a significant tool they have now that they didn’t have in the company’s previous iteration is AI, both generative and agentic. Other vendors, like Quantum Elements, are also using AI in their quantum efforts. Kapur mentions Zapata’s ongoing work with an unnamed tech vendor to apply AI to the quantum space, particularly at the top layers of the software stack, adding that “because of AI’s power, I’m as optimistic as ever about our ability to progress.”

Olson says AI is making another Zapata project easier. He declines to get into details, but says it will “really fundamentally change the way companies can understand the landscape and ecosystem of quantum applications. That’s something that’s valuable even before we have quantum advantage because there’s a lot of work that comes before quantum advantage and getting organizations ready to move when quantum advantage arrives.”

The project comes out of work the vendor did within a DARPA benchmarking program several years ago. The work at the time was “arduous,” he says, but “then the stars sort of aligned with the development of a lot of these new AI tools that actually enables us to scale this in a way that was impossible two years ago.”