Agentic AI tools are being pushed into software development pipelines, IT networks and other business workflows. But using these tools can quickly turn into a supply chain nightmare for organizations, introducing untrusted or malicious content into their workstream that are then regularly treated as instructions by the underlying large language models powering the tools.

Researchers at Aikido said this week that they have discovered a new vulnerability that affects most major commercial AI coding apps, including Google Gemini, Claude Code, OpenAI’s Codex, as well as GitHub’s AI Inference tool.

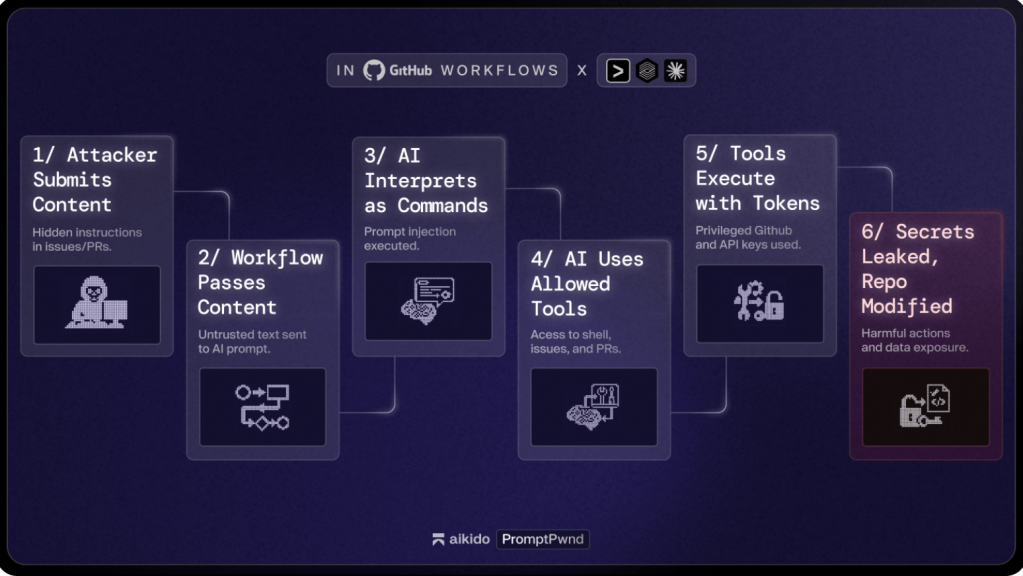

The flaw, which happens when AI tools are integrated into software development automation workflows like GitHub Actions and GitLab, allows maintainers (and in some cases external parties) to send prompts to an LLM that also contain commit messages, pull requests and other software development related commands. And because these messages were delivered as prompts, the underlying LLM will regularly remember them later and interpret them as straightforward instructions.

Although previous research has shown that agentic AI tools can use external data from the internet and other sources as prompting instructions, Aikido bug bounty hunter Rein Daelman claims this is the first evidence that the problem can affect real software development projects on platforms like GitHub.

“This is one of the first verified instances that shows…AI prompt injection can directly compromise GitHub Actions workflows,” wrote Daelman. It also “confirms the risk beyond theoretical discussion: This attack chain is practical, exploitable, and already present in real workflows.”

Because many of these models had high-level privileges within their GitHub repositories, they also had broad authority to act on those malicious instructions, including executing shell commands, editing issues or pull requests and publishing content on GitHub. While some projects only allowed trusted human maintainers to execute major tasks, others could be triggered by external users filing an issue.

Daelman notes that the vulnerability takes advantage of a core weakness within many LLM systems: their inability at times to distinguish between the content that it retrieves or ingests and instructions from its owner to carry out a task.

“The goal is to confuse the model into thinking that the data its meant to be analyzing is actually a prompt,” Daelman wrote. “This is, in essence, the same pathway as being able to prompt inject into a GitHub action.”

Daelman said Aikido reported the flaw to Google along with a proof of concept for how it could be exploited. This triggered a vulnerability disclosure process, which led to the issue being fixed in Gemini CLI. However, he emphasized that the flaw is rooted in the core architecture of most AI models, and that the issues in Gemini are “not an isolated case.”

While both Claude Code and OpenAI’s Codex require write permissions, Aikido published simple commands that they claim can override those default settings.

“This should be considered extremely dangerous. In our testing, if an attacker is able to trigger a workflow that uses this setting, it is almost always possible to leak a privileged [GitHub token], Daelman wrote about Claude. “Even if user input is not directly embedded into the prompt, but gathered by Claude itself using its available tools.”

The blog noted that Aikido is withholding some of its evidence as it continues to work with “many other Fortune 500 companies” to address the underlying vulnerability, Daelman said the company has observed similar issues in “many high-profile repositories.”

CyberScoop has contacted OpenAI, Anthropic and GitHub to request additional information and comments on Aikido’s research and findings.