There will probably never be a better time for any AI-related company to go public than between right now and next summer. The GenAI frenzy is at a fever pitch, and the big four hyperscalers and cloud builders alone – Amazon Web Services, Google Cloud, Meta Platforms, and Microsoft Azure – have collectively projected capital expenses of somewhere between $695 billion and $725 billion in 2026. There is probably at least that much expected to be spent among the big AI model builders (who are starting to build their own datacenters), hyperscalers in China, plus sovereign AI centers, HPC centers, academic centers, and governments that also want to get in on the GenAI action.

GenAI is a tactical and strategic weapon, both economically and militarily, and it is also a cultural force that has the potential to do great things as well as great harm to the established orders in these spheres.

Set against this backdrop, the hyperscalers and cloud builders are designing their own CPUs and XPUs or partnering with companies other than Nvidia and AMD to try to get better bang for the buck for AI inference workloads – or just to get any kind of matrix math compute at all.

It would have been hard for Cerebras Systems to pick a better day to go public and set the tone for the initial public offerings of Anthropic, OpenAI, and SpaceX, the last of which has absorbed the xAI model building business that probably won’t be building Grok models in the future but is selling capacity on the Colossus-1 supercomputer in Memphis to rival model builder Anthropic.

It is a classic “the enemy of my enemy is my friend” scenario, with no love lost between OpenAI and Musk, one of its founding investors who correctly observes that OpenAI was founded as a non-profit but then changed its mind. In the long run, SpaceX will probably build foundation models, or maybe Tesla will. Elon Musk is probably not done moving his pieces around the board, and it would not be surprising to see SpaceX and Tesla merged into one giant conglomerate doing self-driving cars, autonomous robots, and space launches, all of which need physical AI models more than they need GenAI models. If the Musk conglomerate needs a GenAI model, it can just use Anthropic’s Claude and be done with it, trading compute capacity for model access much as Microsoft did for many years with OpenAI.

The appetite for shares in Cerebras Systems, whose bankers sure did take their time getting co-founder and chief executive officer Andrew Feldman to ring the bell at the NASDAQ market, was huge, with an oversubscription of 25X for the 215.23 million shares that floated at $185 a pop, raising $5.55 billion for Cerebras. At the end of the day, the Cerebras shares were worth $311 per share, giving the public float of shares a market capitalization of $39.8 billion. If all of the shares and warrants in the company are taken into account, the market capitalization is about $95 billion.

That’s not too shabby for a company that had a $23 billion valuation after a $1 billion Series H fundraising round back in February.

With the IPO, Feldman’s 4.5 percent stake in Cerebras is worth $3.2 billion, while chief technology officer Sean Lie has a 2.4 percent stake worth $1.7 billion.

We are not going to recap all of the financials for Cerebras, which we drilled down into back in April when the company refiled an S-1 in preparation for going public, something it had planned to do last year but put the kibosh on because it was able to raise money through additional funding rounds. All told, Cerebras has $8.9 billion in cash and equivalents: $1 billion from the Series H round, $1.3 billion in cash and marketable securities, $1 billion in working capital from its $20 billion, 750 megawatt deal to install CS waferscale systems at OpenAI between now and 2028 (plus another 3 gigawatts of gear in 2029 and 2030), and the $5.55 billion infusion from the IPO. That is a good bit of money with which to build those systems for OpenAI as well as Mohamed bin Zayed University of Artificial Intelligence and G42, the two other big customers from the Middle East. The deal with Amazon Web Services has yet to be fully fleshed out, but we think it will happen and there is an outside chance that CS systems become the low latency inference boxes at AWS to complement its homegrown Trainium systems. It would not be surprising to see Anthropic ink a deal with Cerebras, too – and soon, before Anthropic goes public, so it can show it has the iron it needs to do low latency AI inference.

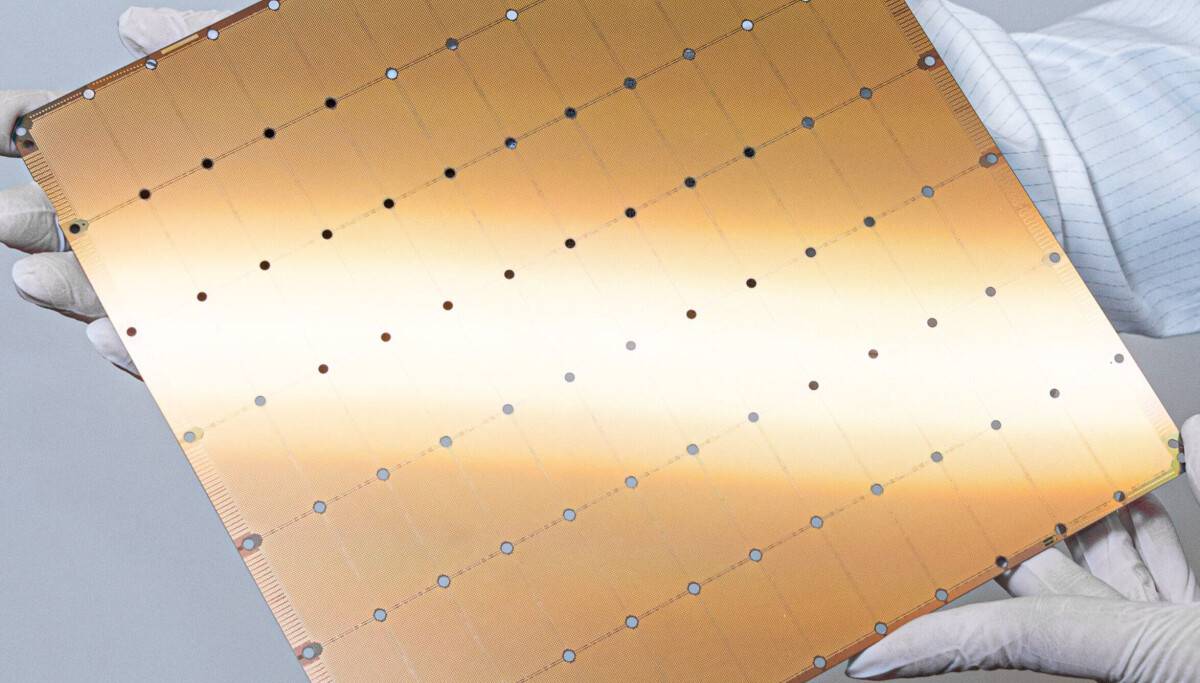

Here is what is very important about that big pile of cash that Cerebras now has. It is the successful innovator in waferscale chippery, and made something that several companies had tried to do and failed at. But silicon wafers are not getting bigger at the same time that transistors are not getting dense enough fast enough. Whatever density and performance that Taiwan Semiconductor Manufacturing Co, Samsung, and Intel can bring to bear in their foundries, we are trapped on a 300 millimeter (12-inch) wafer. The move to 450 millimeter (18-inch) wafers, an effort that failed a decade ago, is not going to happen. And even if that did happen, that would only make the wafer 50 percent wider, which works out to 2.25X the area in which to lay down compute and SRAM.
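To put a number on the wafer size ceiling, here is a minimal sketch of the geometry. It assumes an idealized waferscale die that is the largest square inscribed in a circular wafer, ignoring edge exclusion and rounded corners. The function name `inscribed_square_area_mm2` is ours and purely illustrative. The point is that moving from 300 millimeters to 450 millimeters widens the wafer by 50 percent, while the usable area grows with the square of the diameter.

```python
import math


def inscribed_square_area_mm2(wafer_diameter_mm: float) -> float:
    """Area of the largest square that fits inside a circular wafer.

    The diagonal of the square equals the wafer diameter, so the side is
    d / sqrt(2) and the area is d**2 / 2. This is an idealized bound that
    ignores edge exclusion zones and rounded corners.
    """
    side_mm = wafer_diameter_mm / math.sqrt(2)
    return side_mm * side_mm


area_300 = inscribed_square_area_mm2(300.0)  # about 45,000 mm^2
area_450 = inscribed_square_area_mm2(450.0)  # about 101,250 mm^2

# Area scales with the square of the diameter: (450 / 300) ** 2 = 2.25
area_ratio = area_450 / area_300

print(f"300 mm wafer: {area_300:,.0f} mm^2")
print(f"450 mm wafer: {area_450:,.0f} mm^2")
print(f"Area ratio:   {area_ratio:.2f}X")
```

Under those assumptions, a hypothetical 450 millimeter wafer would deliver a one-time 2.25X area bump, with no further jump in wafer size behind it.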

We think that with the WSE-4 waferscale chip, due perhaps later this year, Cerebras is going to have to go 3D and innovate on the Z axis much as it has done on the X and Y axes. When the low latency AI inference wars started in earnest a little more than a year ago, Cerebras and Groq alike had to gang up multiple machines together not for the compute, but because that was the only way to get enough SRAM in a system to get the model weights in memory close to the compute. At first, it was three CS-3 machines, then it was four, and then Cerebras stopped talking about the number when it gave out test results. So did Groq.
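The ganging math is simple enough to sketch. The snippet below is a rough illustration, not how Cerebras actually sizes a deployment: it assumes 44 GB of on-wafer SRAM per WSE-3 (our assumption, based on the figure Cerebras has cited for that chip), counts model weights only, and ignores KV cache, activations, and any SRAM reserved for other purposes. The function name and the model sizes are hypothetical.

```python
import math

# Assumption: on-wafer SRAM per WSE-3, in GB. Not a figure from this article.
WSE3_SRAM_GB = 44.0


def devices_needed(params_billion: float,
                   bytes_per_param: float,
                   sram_gb_per_device: float = WSE3_SRAM_GB) -> int:
    """Minimum devices needed to hold the model weights entirely in SRAM.

    Counts weights only: 1 billion parameters at 1 byte each is 1 GB.
    KV cache and activations would push the real number higher.
    """
    weights_gb = params_billion * bytes_per_param
    return math.ceil(weights_gb / sram_gb_per_device)


# Hypothetical model sizes at 16-bit (2 bytes) and 8-bit (1 byte) weights.
for params_b, nbytes in [(8, 2), (70, 2), (70, 1), (405, 2)]:
    n = devices_needed(params_b, nbytes)
    print(f"{params_b}B params at {nbytes} bytes each: {n} device(s)")
```

With these assumptions, a 70 billion parameter model at 16-bit precision needs 140 GB for weights alone, which is more than three wafers' worth of SRAM even though a single wafer has plenty of compute.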

What is clear is that the compute to SRAM ratio on the WSE-3 waferscale processors is wrong for low latency inference. There are two ways to fix this. The first is to shrink the process, cut back on the compute, and jack up the SRAM. It would be very difficult, however, to get 3X to 4X more SRAM onto a 2D square cut out of a 12-inch wafer. You would then have to interconnect these wafers to scale out the compute because there would be a lot less of it on each waferscale chip.

The other option, which we have seen both AMD and Intel do with their CPUs and GPUs, is to go vertical with the on-chip memory and stack it up. Stacked SRAM on top of the base WSE-4 wafer could easily solve this problem and boost the effective performance per WS engine such that an AI model may go back to fitting on a single device again for reasonably sized and still useful models. We think there is a high likelihood that the future WSE-4 will do at least this.

We have higher hopes for innovation, of course. We would like for the WSE-4 to have optical links coming out of the wafer to shared DRAM memory trays to significantly expand the MemoryX capacity of the CS-4 system, and to give the memory its own network (as is done with GPUs these days with so-called scale up memory fabrics). Optical links using co-packaged optics could also be used to implement SwarmX clustering, boosting bandwidth between WSE devices significantly.